| [[WM:TECHBLOG]] |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| All Things Linguistic |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Andy Mabbett, aka pigsonthewing. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Anna writes |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| BaChOuNdA |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Bawolff's rants |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Between the Brackets: a MediaWiki Podcast |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Blog on Taavi Väänänen |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Blogs on Santhosh Thottingal |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Bookcrafting Guru |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| brionv |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Catching Flies |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Clouds & Unicorns |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Cogito, Ergo Sumana tag: Wikimedia |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Cometstyles.com |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Commonists |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Content Translation Update |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| cookies & code |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Damian's Dev Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Design at Wikipedia |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| dialogicality |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Diff |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Doing the needful |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Durova |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Ed's Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Einstein University |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Endami |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| English Wikipedia administrators' newsletter |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Fae |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Federico Leva |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| FOSS – Small Town Tech |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Gap-finding Project |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Geni's Wikipedia Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://ad.huikeshoven.org/feeds/posts/default/-/wiki |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://blog.maudite.cc/comments/feed |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://blog.pediapress.com/feeds/posts/default/-/wiki |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://blog.robinpepermans.be/feeds/posts/default/-/PlanetWM |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://bluerasberry.com/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://brianna.modernthings.org/atom/?section=article |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://feeds.feedburner.com/ThoughtsForDeletion^ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://magnusmanske.de/wordpress/?feed=rss2 |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://moriel.smarterthanthat.com/tag/mediawiki/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://terrychay.com/category/work/wikimedia/feed |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://wikipediaweekly.org/feed/podcast |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://www.greenman.co.za/blog/?tag=wikimedia&feed=rss2 |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| http://www.phoebeayers.info/phlog/?cat=10&feed=rss2 |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://addshore.com/feed/atom/?tag=mediawiki%2Cwikimedia%2Cwikibase%2Cwikidata%2Cwikipedia%2Cwikidatacon |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://blog.ash.bzh/en/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://blog.bluespice.com/tag/mediawiki/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://blog.kevinpayravi.com/tag/wikimedia/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://blog.wikimedia.de/tag/Wikidata+English/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://hexmode.com/category/wmf/feed/atom/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://logic10.tumblr.com/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://lu.is/wikimedia/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://mariapacana.tumblr.com/tagged/parsoid/rss |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://medium.com/feed/@nehajha |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://thewikipedian.net/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://tttwrites.wordpress.com/category/wikimedia/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://wandacode.com/category/outreachy-internship/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://wikistrategies.net/category/wiki/feed/atom/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://wllm.com/tag/wikipedia/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://www.guillaumepaumier.com/category/wikimedia/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://www.residentmar.io/feed |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| https://www.wikiphotographer.net/category/wikimedia-commons/feed/ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| in English Archives - Wikimedia Suomi |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| International Wikitrekk |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Language and Translation |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Laura Hale, Wikinews reporter |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Leave it to the prose |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Make love, not traffic. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Mark Rauterkus & Running Mates ponder current events |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| MediaWiki and Wikimedia – etc. etc. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| MediaWiki Testing |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| MediaWiki – Chris Koerner |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| mediawiki – Hexmode's Weblog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| MediaWiki – It rains like a saavi |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| MediaWiki – Ryan D Lane |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Ministry of Wiki Affairs |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Muddyb Mwanaharakati |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Musings of Majorly |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| My Outreachy 2017 @ Wikimedia Foundation |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| NonNotableNatterings |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Notes from the Bleeding Edge |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Nothing three |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Okinovo okýnko |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Open Codex |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Open Source Exile |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Original Research |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Pablo Garuda |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Pau Giner |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Personal – The Moon on a Stick |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Phabricating Phabricator |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Planet Wikimedia Archives - Entropy Wins |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Planet Wikimedia – OpenMeetings.org | Announcements |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| planetwikimedia – copyrighteous |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Political Bias on Wikipedia |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Posts (#wikimedia) |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Professional Wiki Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| project-green-smw |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| ProWiki Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Ramblings by Paolo on Web2.0, Wikipedia, Social Networking, Trust,

Reputation, … |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Rock drum |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Routing knowledge |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Sam Wilson's notebook |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Sam Wilson: Wikimedia |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Sammy's Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Score all the things |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Scripts++ |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Semantic MediaWiki – news |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Sentiments of a Dissident |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Stories by Megha Sharma on Medium |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Sue Gardner's Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Tech News weekly bulletin feed |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Technical & On-topic – Mike Baynton’s Mediawiki Dev Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| The Academic Wikipedian |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| The Lego Mirror - MediaWiki |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| The life of James R. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| The Signpost |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| The Speed of Thought |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| TheDJ writes |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| This Month in GLAM |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Timo Tijhof |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Ting's Wikimedia Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Tyler Cipriani: blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Vinitha's blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

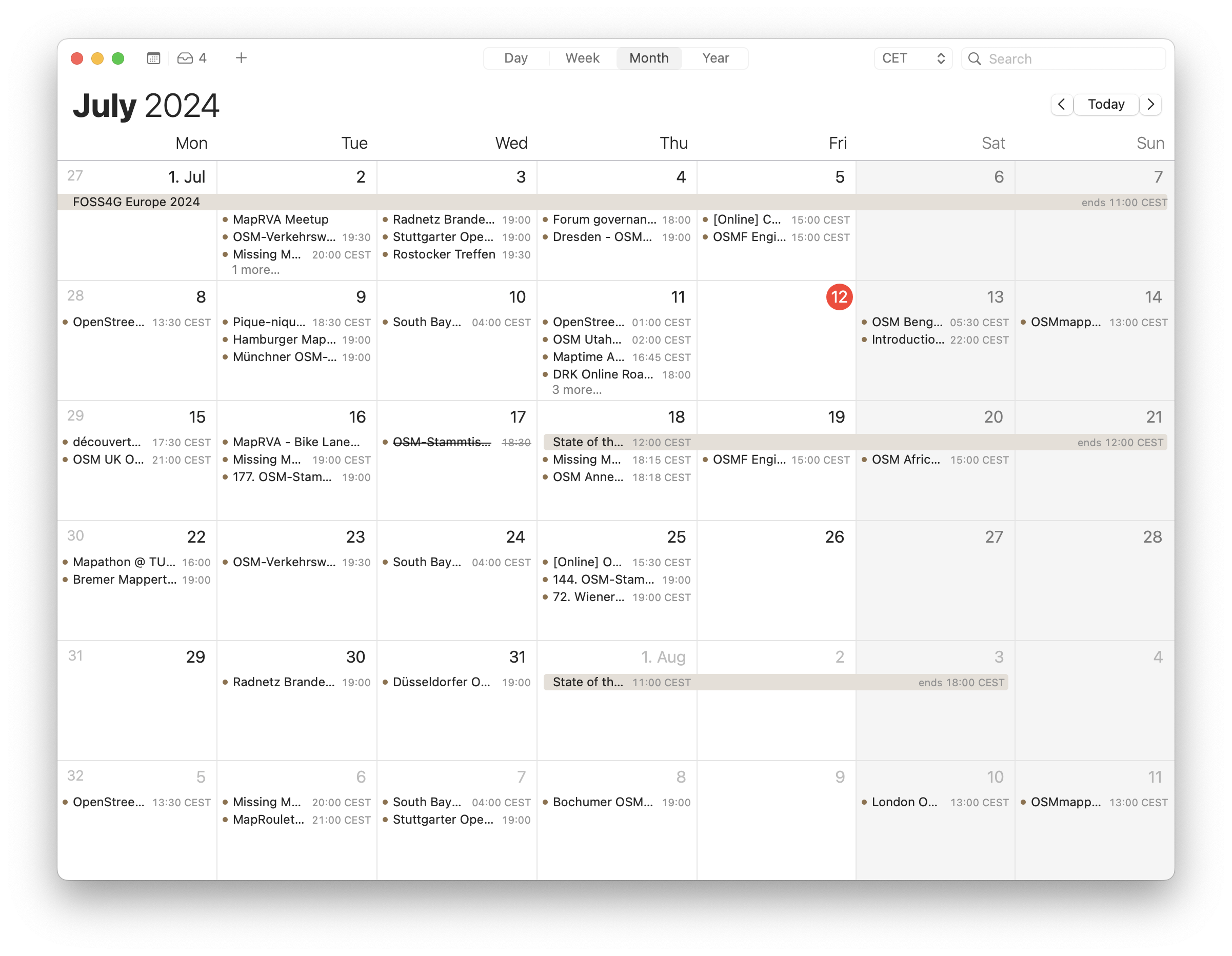

| weekly – semanario – hebdo – 週刊 – týdeník – Wochennotiz – 주간 –

tygodnik |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| What is going on in Europe? |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wiki Education |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wiki Loves Monuments |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wiki Northeast |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wiki Playtime - Medium |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – David Gerard |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – Gabriel Pollard |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – Our new mind |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – stu.blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – The life on Wikipedia – A Wikignome's perspecive |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki – Ziko's Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wiki-en – [[content|comment]] |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikibooks News |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Australia news |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia DC Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Design Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Europe |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Foundation |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia on Kosta Harlan |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Security Team |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Status - Incident History |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia Tech News |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia | ഗ്രന്ഥപ്പുര |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – andré klapper's blog. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – apergos' open musings |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – Bitterscotch |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia – DcK Area |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – Harsh Kothari |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia – Jan Ainali |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – millosh’s blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – Open World |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikimedia – Thomas Dalton |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia – Tim Starling's blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikimedia – Witty's Blog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikinews Reports |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia & Linterweb |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia - nointrigue.com |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia Archives — Andy Mabbett, aka pigsonthewing. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia Notes from User:Wwwwolf |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia – Aharoni in Unicode |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikipedia – Andrew Gray |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia – Blossoming Soul |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia – Bold household |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikipedia – Going GNU |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia – mlog |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedia – ragesoss |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikipedia – The Longest Now |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikipedian in Residence for Gender Equity at West Virginia

University |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| WikiProject Oregon |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikisorcery |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wikistaycation |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| wikitech – domas mituzas |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| WMUK |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Words and what not |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Wow. So wikimedia. Such quality. Many testing. Very team. |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Writing Within the Rules |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| XD @ WP |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| {{Hatnote}} |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |

| Ø |

XML |

Saturday, 27 July 2024 02:01 |

Saturday, 27 July 2024 03:01 |
